First detailed mapping and modeling of thalamus inputs onto visual cortex neurons show brain leverages “wisdom of the crowd” to process sensory information.

David Orenstein | Picower Institute for Learning and Memory

February 2, 2023

The brain’s cerebral cortex produces perception based on the sensory information it’s fed through a region called the thalamus.

“How the thalamus communicates with the cortex is a fundamental feature of how the brain interprets the world,” says Elly Nedivi, the William R. and Linda R. Young Professor in The Picower Institute for Learning and Memory at MIT. Despite the importance of thalamic input to the cortex, neuroscientists have struggled to understand how it works so well given the relative paucity of observed connections, or “synapses,” between the two regions.

To help close this knowledge gap, Nedivi assembled a collaboration within and beyond MIT to apply several innovative methods. In a new study described in Nature Neuroscience, the team reports that thalamic inputs into superficial layers of the cortex are not only rare, but also surprisingly weak, and quite diverse in their distribution patterns. Despite this, they are reliable and efficient representatives of information in the aggregate, and their diversity is what underlies these advantages.

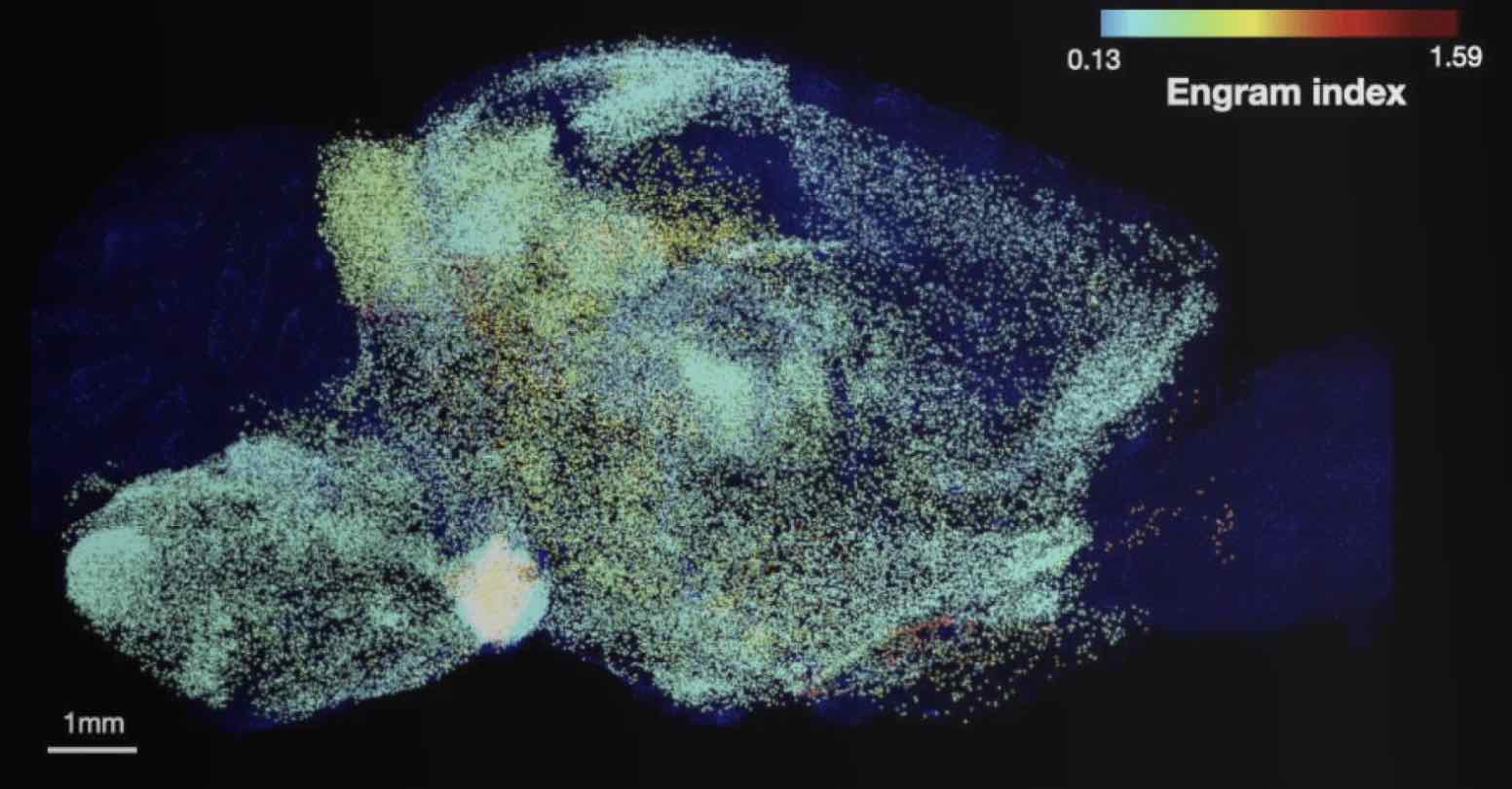

Essentially, by meticulously mapping every thalamic synapse on 15 neurons in layer 2/3 of the visual cortex in mice and then modeling how that input affected each neuron’s processing of visual information, the team found that wide variations in the number and arrangement of thalamic synapses made them differentially sensitive to visual stimulus features. While individual neurons therefore couldn’t reliably interpret all aspects of the stimulus, a small population of them could together reliably and efficiently assemble the overall picture.

“It seems this heterogeneity is not a bug; it’s a feature that provides not only a cost benefit, but also confers flexibility and robustness to perturbation,” says Nedivi, corresponding author of the study and a member of MIT’s faculty in the departments of Biology and Brain and Cognitive Sciences.

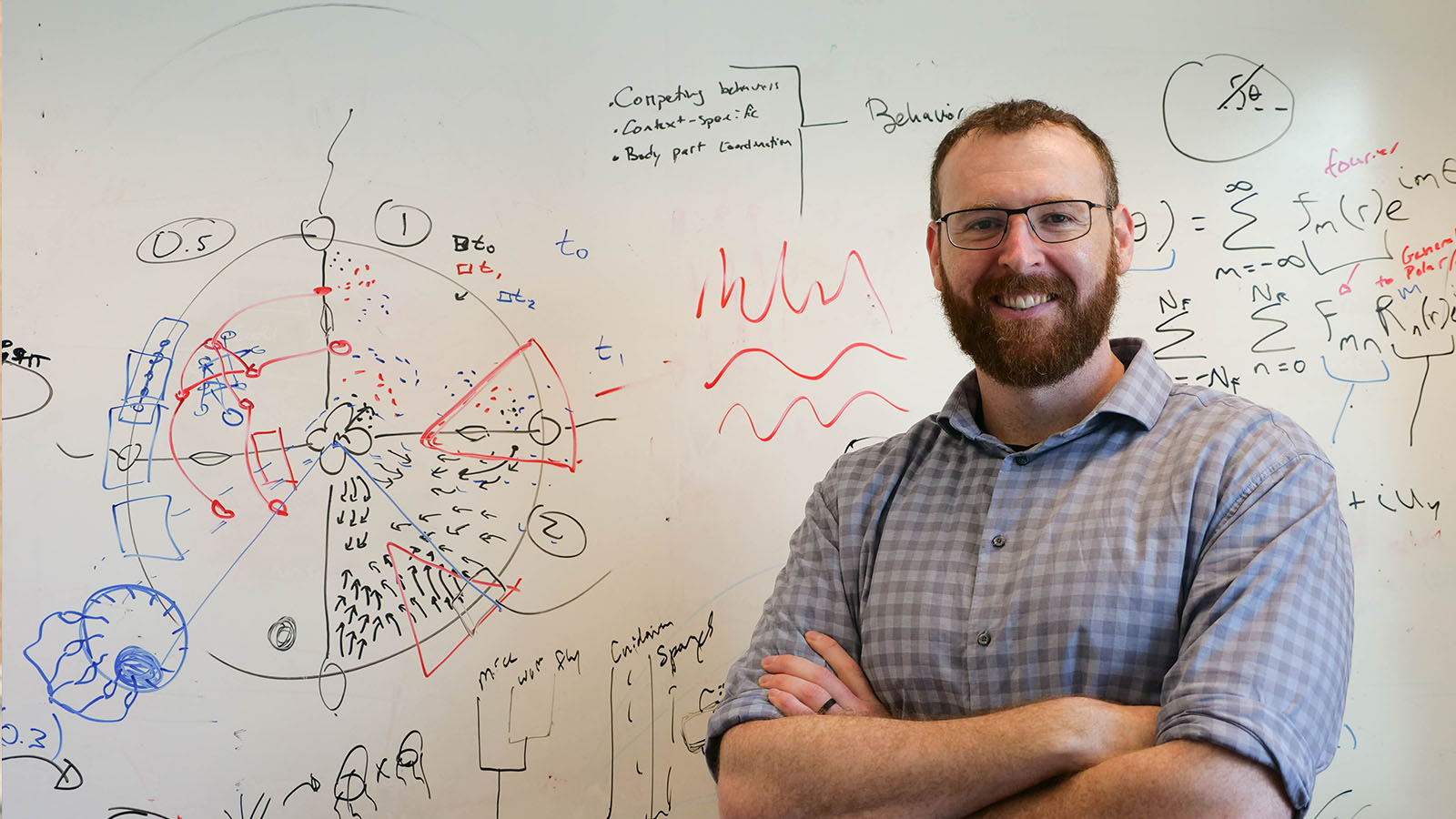

Aygul Balcioglu, the research scientist in Nedivi’s lab who led the work, adds that the research has created a way for neuroscientists to track all the many individual inputs a cell receives as that input is happening.

“Thousands of information inputs pour into a single brain cell. The brain cell then interprets all that information before it communicates its own response to the next brain cell,” Balcioglu says. “What is new, and we feel exciting, is we can now reliably describe the identity and the characteristics of those inputs, as different inputs and characteristics convey different information to a given brain cell. Our techniques give us the ability to describe in living animals where in the structure of the single cell what kind of information gets incorporated. This was not possible until now.”

“MAP”ping and modeling

Nedivi and Balcioglu’s team chose layer 2/3 of the cortex because this layer is where there is relatively high flexibility, or “plasticity,” even in the adult brain. Yet, thalamic innervation there has rarely been characterized. Moreover, Nedivi says, even though the model organism for the study was mice, those layers are the ones that have thickened the most over the course of evolution, and therefore play especially important roles in the human cortex.

Precisely mapping all the thalamic innervation onto entire neurons in living, perceiving mice is so daunting that it had never been done.

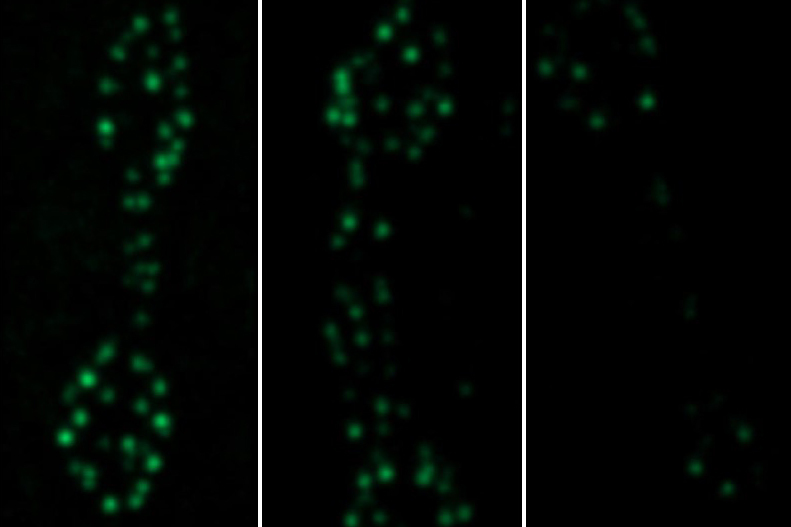

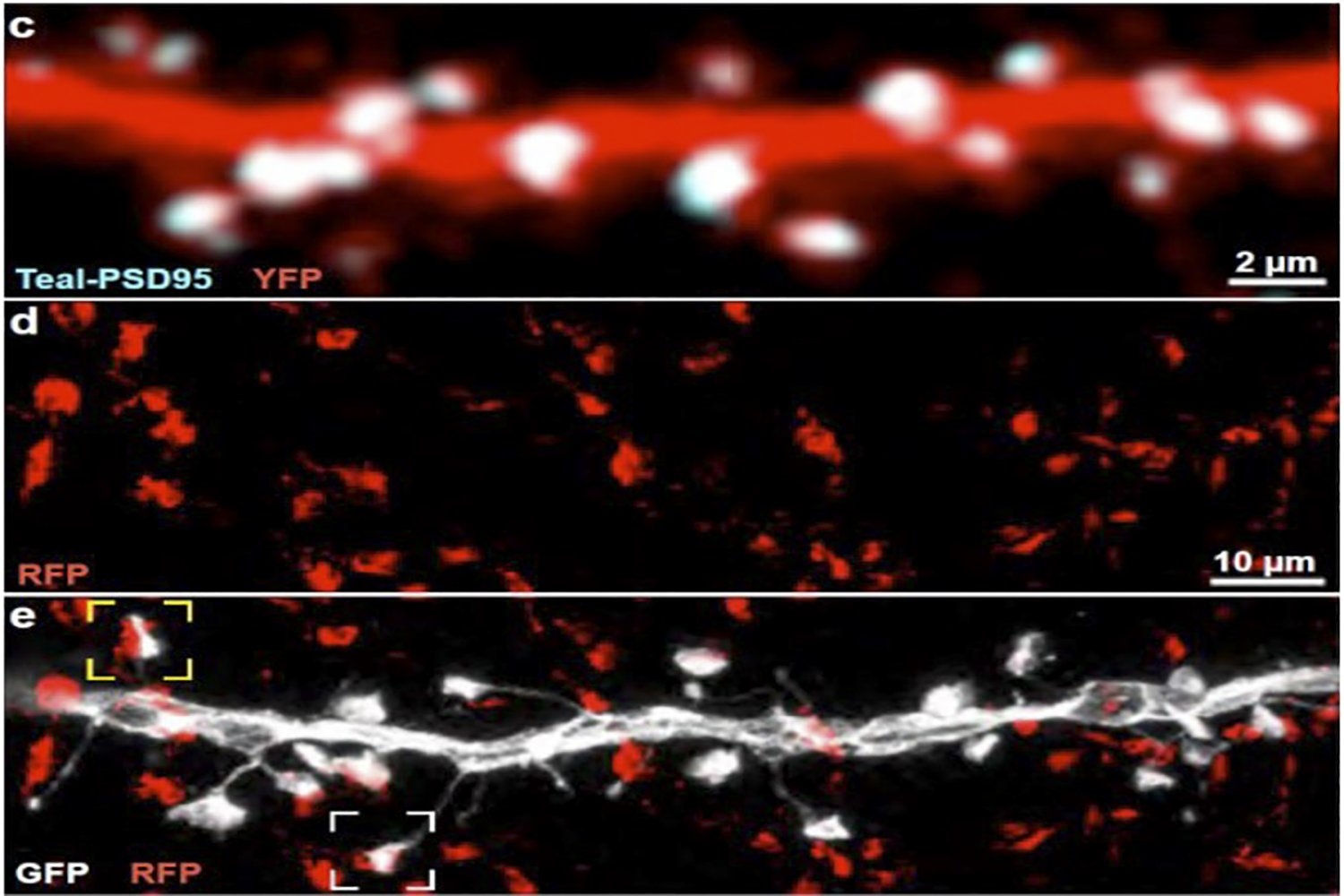

To get started, the team used a technique established in Nedivi’s lab that enables observing whole cortical neurons under a two-photon microscope using three different color tags in the same cell simultaneously. In this case, though, they used one of the colors to label thalamic inputs contacting the labeled cortical neurons. Wherever the color of those thalamic inputs overlapped with the color labeling excitatory synapses on the cortical neurons, the overlap marked the location of a putative thalamic input.

Two-photon microscopes offer deep looks into living tissues, but their resolution is not sufficient to confirm that the overlapping labels are indeed synaptic contacts. To confirm their first indications of thalamic inputs, the team turned to a technique called MAP invented in the Picower Institute lab of MIT chemical engineering Associate Professor Kwanghun Chung. MAP physically enlarges tissue in the lab, effectively increasing the resolution of standard microscopes. Rebecca Gillani, a postdoc in the Nedivi lab, with help from Taeyun Ku, a Chung Lab postdoc, was able to combine the new labeling and MAP to definitively resolve, count, map, and even measure the size of all thalamic-cortical synapses onto entire neurons.

The analysis revealed that the thalamic inputs were rather small (typically presumed to also be weak and maybe temporary), and accounted for between 2 and 10 percent of the excitatory synapses on individual visual cortex neurons. The variance in thalamic synapse numbers was not just at a cellular level, but also across different “dendrite” branches of individual cells, accounting for anywhere between zero and nearly half the synapses on a given branch.

“Wisdom of the crowd”

These facts presented Nedivi’s team with a conundrum. If the thalamic inputs were weak, sparse, and widely varying, not only across neurons but even across each neuron’s dendrites, then how good could they be for reliable information transfer?

To help solve the riddle, Nedivi turned to colleague Idan Segev, a professor at Hebrew University in Jerusalem specializing in computational neuroscience. Segev and his student Michael Doron used the Nedivi lab’s detailed anatomical measurements and physiological information from the Allen Brain Atlas to create a biophysically faithful model of the cortical neurons.

Segev’s model showed that when the cells were fed visual information (the simulated signals of watching a grating go past the eyes) their electrical responses varied based on how their thalamic input varied. Some cells perked up more than others in response to different aspects of the visual information, such as contrast or shape, but no single cell revealed much about the overall picture. But with about 20 cells together, the whole visual input could be decoded from their combined activity — a so-called “wisdom of the crowd.”
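The “wisdom of the crowd” idea can be illustrated with a toy simulation. The sketch below is not the biophysical model Segev and Doron built; it is a minimal statistical stand-in under stated assumptions. Only the population size of 20 cells comes from the article. The eight stimulus classes, the Gaussian tuning values, the noise level, and the template-matching decoder (along with the function name `decode_accuracy`) are all hypothetical choices made for illustration.

```python
import numpy as np


def decode_accuracy(tuning, noise_sd, n_trials, rng):
    """Fraction of trials on which a template-matching decoder picks the true stimulus.

    tuning: array of shape (n_cells, n_stim) holding each cell's mean response
    to each stimulus (a hypothetical stand-in for heterogeneous thalamic drive).
    """
    n_cells, n_stim = tuning.shape
    stim = rng.integers(0, n_stim, size=n_trials)  # true stimulus on each trial
    # Single-trial responses: each cell's mean response plus independent noise.
    resp = tuning[:, stim].T + rng.normal(0.0, noise_sd, size=(n_trials, n_cells))
    # Squared distance from each trial's response vector to every stimulus template.
    dist = ((resp[:, :, None] - tuning[None, :, :]) ** 2).sum(axis=1)
    return float((dist.argmin(axis=1) == stim).mean())


rng = np.random.default_rng(0)
n_cells, n_stim = 20, 8          # 20 cells as in the article; 8 stimuli is an assumption
noise_sd, n_trials = 1.0, 2000   # assumed noise level and trial count

# Every cell gets its own random tuning, so the cells are heterogeneous by construction.
tuning = rng.normal(0.0, 1.0, size=(n_cells, n_stim))

# Average accuracy when decoding from one cell at a time.
single_acc = float(np.mean(
    [decode_accuracy(tuning[i:i + 1], noise_sd, n_trials, rng) for i in range(n_cells)]
))
# Accuracy when decoding from all 20 cells together.
pop_acc = decode_accuracy(tuning, noise_sd, n_trials, rng)

print(f"mean single-cell accuracy: {single_acc:.2f}")
print(f"20-cell population accuracy: {pop_acc:.2f}")
```

In this toy setting each cell alone separates only some stimuli from others, while the combined response vector separates nearly all of them, which is the qualitative pattern the article describes.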

Notably, Segev compared the performance of cells with the weak, sparse, and varying input akin to what Nedivi’s lab measured, to the performance of a group of cells that all acted like the best single cell of the lot. Up to about 5,000 total synapses, the “best” cell group delivered more informative results, but after that level the small, weak, and diverse group actually performed better. In the race to represent the total visual input with at least 90 percent accuracy, the small, weak, and diverse group reached that level with about 6,700 synapses, while the “best” cell group needed more than 7,900.
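The size of that saving follows from the two synapse counts quoted above. Because the article says the “best” cell group needed “more than 7,900” synapses, treating 7,900 as a lower bound gives a conservative estimate:

```latex
\[
\frac{7900 - 6700}{7900} = \frac{1200}{7900} \approx 0.15
\]
```

In other words, the diverse group reached 90 percent accuracy with at least 1,200 fewer synapses, a reduction of roughly 15 percent or more.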

“Thus heterogeneity imparts a cost reduction in terms of the number of synapses required for accurate readout of visual features,” the authors wrote.

Nedivi says the study raises tantalizing implications regarding how thalamic input into the cortex works. One, she says, is that given the small size of thalamic synapses they are likely to exhibit significant “plasticity.” Another is that the surprising benefit of diversity may be a general feature, not just a special case for visual input in layer 2/3. Further studies, however, are needed to know for sure.

In addition to Nedivi, Balcioglu, Gillani, Ku, Chung, Segev and Doron, other authors are Kendyll Burnell and Alev Erisir.

The National Eye Institute of the National Institutes of Health, the Office of Naval Research, and the JPB Foundation funded the study.